Claude AI Usage Limits: What Changed and How to Make Them Last

TL;DR: Claude’s usage limits feel tighter in March 2026 because they are. The short version: Anthropic has more demand than GPU capacity right now. Millions of new users arrived as word got around about how good Claude is, especially after the OpenAI Pentagon boycott. The off-peak 2x promotion ends March 28. And multiple Max subscribers report usage meters jumping from under 50% to 100% on single prompts. Below: what the limits actually are, why they’re worse right now, and specific ways to stretch your quota.

Short on patience? Skip straight to the fixes.

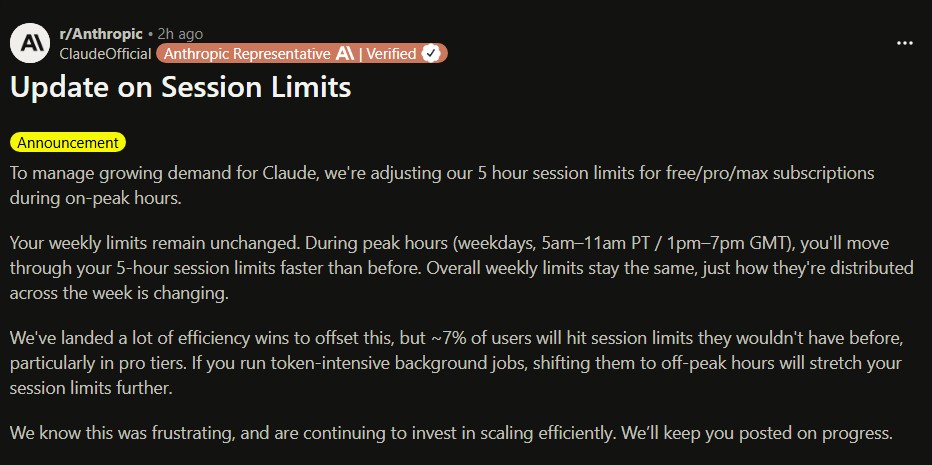

📢 Update, March 26, 2026

Hours after this post went up, Anthropic officially confirmed that session limits are now tighter during peak hours by design. Here’s what they said, in plain English:

- Your weekly total is unchanged. You’re not getting less Claude overall.

- Weekday peak hours now cost more. During 5am to 11am PT (7am to 1pm CT / 8am to 2pm ET), your 5-hour session burns faster than before. The same task at 10am Tuesday may hit your limit sooner than it would at 9pm.

- About 7% of users will notice. Anthropic says Pro subscribers are most affected.

- Off-peak usage is now your friend. If you run heavy tasks, shifting them to evenings or weekends stretches your limits further.

People were not imagining it. The daytime squeeze is real, and now it’s official policy.
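If you schedule heavy tasks, the peak window above is easy to check in code. This is a minimal sketch, assuming the window is exactly weekdays 5am to 11am Pacific with the end time exclusive; `is_peak` is a made-up helper name, not anything Anthropic provides.

```python
from datetime import datetime, time
from zoneinfo import ZoneInfo

PACIFIC = ZoneInfo("America/Los_Angeles")
PEAK_START = time(5, 0)   # 5am PT, as summarized in the update above
PEAK_END = time(11, 0)    # 11am PT (treated as exclusive here; an assumption)


def is_peak(moment: datetime) -> bool:
    """Return True if a timezone-aware `moment` falls in the weekday peak window."""
    local = moment.astimezone(PACIFIC)
    is_weekday = local.weekday() < 5  # Monday=0 ... Friday=4
    return is_weekday and PEAK_START <= local.time() < PEAK_END


# The example from the update: 10am Tuesday vs. 9pm the same day (Pacific time).
print(is_peak(datetime(2026, 3, 24, 10, 0, tzinfo=PACIFIC)))  # True
print(is_peak(datetime(2026, 3, 24, 21, 0, tzinfo=PACIFIC)))  # False
```

Pass timezone-aware datetimes. A naive one gets interpreted in your machine's local timezone, which may not be what you want.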

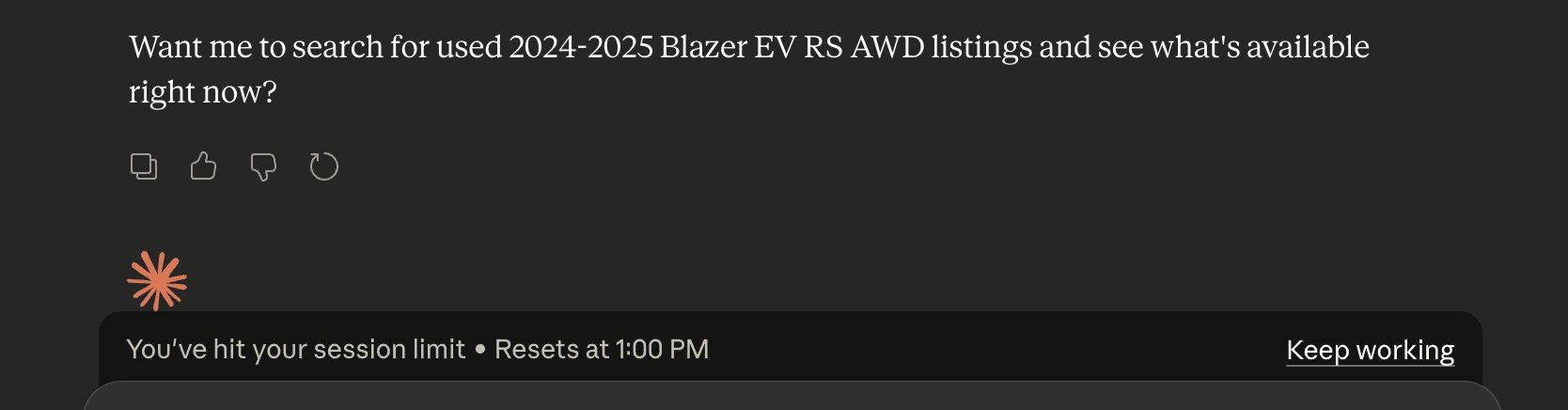

One Question, Zero Answers

My wife is not a developer. She uses Claude the way most people do: ask a question, get an answer or research more, move on. Usually this works quite well, and she gets a LOT of good work done with Claude as an assistant. So when she asked Claude a few questions about used Blazer EVs and immediately hit her session limit, something did not seem right.

One question. One limit. One unhappy user. She then messaged me to ask if she was crazy or if Claude was letting her do much LESS than before. She’s not wrong. The limits are super low for some people right now. We messaged a bit, and this is the conclusion she came to:

But “be smarter about your questions” shouldn’t be the answer when you’re paying for a subscription. And yet here we are.

What Are Claude’s Usage Limits?

Anthropic’s help center breaks it down into two types:

- Usage limits cap how many messages you can send within a rolling window (roughly 5 hours). When you hit the cap, you’re locked out until the window resets. The exact number of messages varies by model, conversation length, and how many tools Claude uses per response.

- Length limits cap how long a single conversation can get. Long threads cost more because Claude reprocesses the entire conversation history with every message. A 100-message thread is dramatically more expensive per response than a fresh chat.

The limits scale by plan:

| Plan | Price | Usage |
|---|---|---|
| Free | $0 | Limited messages, tighter during peak hours |
| Pro | $20/mo | ~5x free tier |
| Max 5x | $100/mo | 5x Pro limits |
| Max 20x | $200/mo | 20x Pro limits |

One detail most people miss: usage counts across all surfaces. Messages on claude.ai, Claude Code, and Claude Desktop all draw from the same pool. If you burned through tokens debugging code in Claude Code this morning, your wife’s Blazer EV question this afternoon is competing for what’s left.

Why Limits Feel Worse Right Now

As of March 26, Anthropic has confirmed that tighter daytime limits are deliberate. But the full picture involves several overlapping factors, and they all point to the same root cause: demand outran available GPU capacity.

Millions of new users arrived. After OpenAI signed a Pentagon contract in late February, ChatGPT uninstalls spiked 295% in a single day. The QuitGPT movement claimed 2.5 million participants. Claude hit #1 on the US App Store for the first time, surpassing ChatGPT in daily downloads. Anthropic’s web traffic jumped over 30% month-over-month.

That’s a massive influx of new users hitting the same GPU infrastructure. Anthropic’s annualized revenue surged to $19 billion by March 2026, but inference costs scale with every user, every message, every long conversation. Flat monthly subscriptions were already strained before the wave hit.

Here’s the thing people keep missing: this isn’t Anthropic being stingy. You can sign up 100,000 new subscribers overnight. You cannot add 100,000 GPUs worth of inference capacity overnight. When demand jumps faster than compute supply, the gap has to show up somewhere: slower responses, lower quality, or tighter limits. Anthropic chose tighter limits. That’s arguably the least bad option.

The off-peak promotion is ending. Anthropic ran a promotion through March 28 doubling usage limits during off-peak hours. If things felt tight with double limits, they will feel tighter without them.

Something may also be broken. Since March 23, Max subscribers report usage meters jumping from 52% to 91% on single prompts. One Max 20x user ($200/month) watched their meter hit 100% after roughly 90 minutes of normal work. This isn’t the first time: less than a month ago, Anthropic reset Claude Code rate limits after a prompt caching bug caused similar drain. The official announcement explains the deliberate peak-hour tightening, but it doesn’t fully account for the sudden single-prompt meter jumps some users are reporting. Those may be a separate issue.

The Bigger Picture: Why Both Companies Are Making Opposite Moves

While Anthropic is tightening limits under user load, OpenAI is loosening them. That’s not because one company cares more about users than the other. They’re operating under different capacity constraints.

OpenAI is hemorrhaging users and trying to win them back. Their response has been aggressive: GPT-5.4 launched March 5 with native computer-use capabilities, explicitly targeting Claude Code and Claude’s professional user base. They shut down Sora on March 24 after the video app burned an estimated $15 million per day in compute against just $2.1 million in lifetime revenue. That freed-up infrastructure isn’t sitting idle. OpenAI is reallocating those GPU resources toward the professional workflows where Claude has been eating their lunch.

This is a supply-and-demand story, not a morality play. OpenAI has compute it can reallocate. Anthropic has demand outrunning available GPU supply. If you’re the subscriber hitting the wall, that context doesn’t make the experience less frustrating. But it better explains why the product behaves this way right now, and why it’s likely temporary as Anthropic scales infrastructure to match.

What Burns Through Your Quota Fastest

Not all messages cost the same. Understanding what’s expensive helps you budget.

- Long conversations. This is the biggest one. Every message in a thread gets reprocessed as context. Message #50 in a conversation costs dramatically more than message #1. A 100-message thread can burn through your quota several times faster than the same questions asked in fresh chats.

- Tool use. Web search, code execution, file analysis, and connected services (Gmail, Google Drive, MCP servers) all consume additional tokens. Claude’s tool calls happen behind the scenes, and a single “search the web for X” can involve multiple searches, page reads, and summarization steps you never see.

- Attached files and images. Every file or image you upload gets processed as part of the conversation context. Large PDFs or multiple screenshots add up fast.

- Opus-tier models. More capable models cost more per message. If you’re using Claude Opus for a task that Sonnet could handle, you’re paying a premium in quota.
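To see why long threads compound, here is a toy model of the reprocessing cost. It is a rough sketch under loud assumptions: every turn adds a fixed 500 tokens (a made-up figure), the full history is re-sent on each message, and prompt caching is ignored. Anthropic does not publish how the usage meter is actually computed, so treat the ratio as an illustration of the shape, not a measurement.

```python
def tokens_processed(num_messages: int, tokens_per_turn: int = 500) -> int:
    """Total input tokens processed over a thread in which each new message
    re-sends the whole prior history.

    Toy model: turn k carries k * tokens_per_turn tokens of context.
    """
    return sum(k * tokens_per_turn for k in range(1, num_messages + 1))


# Same 100 messages, split two ways (hypothetical workload).
one_long_thread = tokens_processed(100)        # one 100-message thread
ten_short_threads = 10 * tokens_processed(10)  # ten 10-message threads

print(one_long_thread)                                # 2525000
print(ten_short_threads)                              # 275000
print(round(one_long_thread / ten_short_threads, 1))  # 9.2
```

The total grows roughly with the square of the thread length, which is why starting fresh chats pays off faster than trimming individual messages.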

How to Make Claude Last Longer

If you only change two habits, do these first: start new chats more often and put your full request in the first message.

For Everyone

- 🆕 Start a new chat when you switch topics. This is the single highest-impact change you can make. Long threads get more expensive with every turn. Each topic switch is a chance to reset the context cost to zero.

⭐ Likewise, if you come to a natural transition from one phase of a project or research conversation, craft a handover prompt and start fresh on the next phase in a NEW conversation.

- 📦 Front-load your context. Instead of five messages building up to your actual question, put it all in one. One dense prompt is almost always cheaper than five setup messages.

- 📁 Use Projects for recurring work. Project instructions get cached on Anthropic’s side, saving you from re-uploading the same context every session. I wrote a full guide on setting up Claude project instructions if you want to get this right.

- 🧰 Turn off tools you’re not using. Web search, code execution, and connectors add token overhead just by being available. Disable them in Settings when you don’t need them.

- 🔀 Use multiple AI models. Keep ChatGPT, Gemini, or a local model available for lighter tasks and second opinions. Getting a second opinion from a different AI often catches blind spots anyway.

- 💳 Enable extra usage if lockouts cost you more than overages. Anthropic’s pay-as-you-go overflow lets you set a spending cap so it doesn’t run away. If hitting the wall mid-task costs you more in lost productivity than a few dollars, this is worth turning on.

Here’s a prompt structure that front-loads everything Claude needs in a single message:

I need help with [task].

Goal: [what success looks like]

Context: [background facts Claude needs up front]

Constraints: [budget, deadline, preferences, things to avoid]

Please return: [exact format you want the answer in]

For Claude Code and Heavy Workflows

- 🧹 Use /clear between tasks. Treat each major task like a fresh session. Accumulated context is the silent quota killer.

- 🗺️ Use plan mode (Shift+Tab) before implementation. A few seconds of planning is cheaper than trial-and-error tool calls. Let Claude think before it starts writing and executing code.

- 📄 Keep your CLAUDE.md lean. Your project instructions load into every conversation. Stale rules become a recurring token tax on every single message.

- 🚫 Use .claudeignore. Exclude build artifacts, lock files, node_modules, and anything else Claude doesn’t need to scan. Treat your context window like RAM: load only what you need right now.

- ⚙️ Use the API for production workloads. The Anthropic API offers pay-per-token pricing with no arbitrary session windows. For client work or heavy automated workflows, the math often favors the API over a Max subscription.

When starting a new Claude Code task after clearing context:

/clear

New task:

Goal: [what you're building or fixing]

Files to inspect first: [specific paths]

Constraints: [tests that must pass, style rules, deadline]

Output: [what you want back - code, PR, analysis]

Should You Upgrade to Max?

Maybe. But go in with realistic expectations. Max 5x ($100/month) makes sense if you’re hitting Pro limits daily and the interruptions are costing you real productivity. Max 20x ($200/month) is for people running Claude Code all day or doing heavy professional workflows.

But even Max 20x subscribers are reporting issues right now. And Anthropic’s announcement confirms that the peak-hour tightening applies to Free, Pro, and Max alike. Upgrading buys you more room, not immunity. If you’re considering the upgrade, I’d wait until after the March 28 promotion ends to see what the baseline experience looks like without the 2x buffer.

Personal note: I’m on a higher-tier plan and use Claude heavily every day, including Claude Code for development work. My own experience has been noticeably better than a lot of what’s being reported online. I don’t know whether Anthropic quietly prioritizes longer-tenured accounts, higher-tier subscribers, certain usage patterns, or something else entirely. That’s speculation, not a claim. But the gap between my experience and the public complaints is real enough that I wanted to flag it. I’ll update this post after March 28 when the promotion ends and we can see baseline limits more clearly.

For many power users, the better move might be a Pro subscription for interactive work plus API access for heavy lifting. That way you’re not dependent on a single plan’s session caps for everything.
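If you want to run the subscription-versus-API math for your own workload, a back-of-the-envelope sketch looks like this. The per-million-token prices and the monthly token volumes below are placeholders I picked for illustration, not quoted rates; check Anthropic's current pricing page and your own usage before deciding. The function names are hypothetical too.

```python
def api_monthly_cost(input_mtok: float, output_mtok: float,
                     input_price: float, output_price: float) -> float:
    """Estimated monthly API bill in dollars.

    Volumes are in millions of tokens; prices are dollars per million tokens.
    """
    return input_mtok * input_price + output_mtok * output_price


def cheaper_option(api_cost: float, subscription_price: float) -> str:
    """Name the cheaper choice for a given month."""
    return "api" if api_cost < subscription_price else "subscription"


# Placeholder prices (assumptions, not quoted rates).
INPUT_PRICE = 3.0    # $ per million input tokens
OUTPUT_PRICE = 15.0  # $ per million output tokens
MAX_5X = 100.0       # $ per month, from the plan table above

light_month = api_monthly_cost(10, 2, INPUT_PRICE, OUTPUT_PRICE)
heavy_month = api_monthly_cost(40, 5, INPUT_PRICE, OUTPUT_PRICE)

print(light_month, cheaper_option(light_month, MAX_5X))  # 60.0 api
print(heavy_month, cheaper_option(heavy_month, MAX_5X))  # 195.0 subscription
```

The point is the shape of the comparison: a flat plan wins once your volume is high and steady, while pay-per-token wins when usage is bursty or modest.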

Where This Is Heading

The industry is clearly moving toward consumption-based pricing. Anthropic already offers extra usage as an overflow mechanism. OpenAI has been experimenting with similar models. I’d rather pay for what I use than guess at opaque session limits that can apparently drain in 90 minutes on a $200/month plan. In the meantime, we’re in the awkward transition where pricing doesn’t match the product promise. “Unlimited” was never really unlimited. “5x usage” doesn’t mean 5x as much work when different tasks consume wildly different amounts of quota.

Credit where it’s due: Anthropic’s Reddit announcement is a step in the right direction. For most of March, users were guessing about what was happening with limits. Now there are specific numbers (7% of users affected), specific windows (5am to 11am PT weekdays), and a direct acknowledgment that it’s frustrating. That’s more transparency than we usually get from AI companies on infrastructure pain.

Anthropic is building what I believe is the best conversational AI on the market. My wife picked it up with zero coaching and immediately found it useful. That’s a genuine product win. But getting cut off after one question about used cars doesn’t feel like a billing policy. It feels like the tool quit on you.

For now, being strategic about your AI usage isn’t optional. It’s the cost of using the best tools available while the business model catches up to the product. As my wife put it: silly, but manageable. As long as it doesn’t get worse.

Sources and Further Reading

- How Do Usage and Length Limits Work? – Anthropic Help Center

- Usage Limit Best Practices – Anthropic Help Center

- Claude March 2026 Usage Promotion – Anthropic Help Center

- Manage Extra Usage for Paid Plans – Anthropic Help Center

- Update on Session Limits – Anthropic Official (r/Anthropic, March 26, 2026)

- ChatGPT Uninstalls Surged 295% After DoD Deal – TechCrunch

- Claude Max Subscribers Left Frustrated After Usage Limits Drained Rapidly – PiunikaWeb

- OpenAI Is Shutting Down Its Sora Video App – CNN

- GPT-5.4 Targets Anthropic’s Claude – TrendingTopics

Information in this post was accurate at the time of writing (March 2026). AI pricing and limits change frequently. If something here doesn’t match what you’re seeing, drop a comment and I’ll update the post.