The Mother-In-Law Method for Claude or ChatGPT

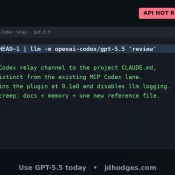

A Reddit post called “The Mother-In-Law Method” is making the rounds in r/ClaudeAI right now. The pitch from u/Ancient_Perception_6: prompt Claude to review your code as if it were written by your mother-in-law, the one who insulted your cooking and your “weird-looking feet.” Find revenge in the diff. Claude obliged, spawned four parallel “hostile reviewers” with distinct beats (money math, tenancy, API contracts, tests), and 31 minutes later returned 27 issues plus nits. Funny post. Funnier thread. It’s tagged as