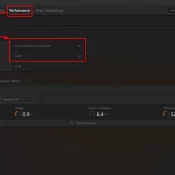

How to Allocate VRAM on AMD Strix Halo for LLMs and AI Workloads

If you have a Ryzen AI Max+ 395 (Strix Halo) system with 128GB of RAM and you’re wondering why your local LLM host (be it LM Studio, Ollama, or whatever) can’t see most of that memory, this is the fix. AMD’s unified memory architecture means your CPU and GPU share the same physical RAM, but Windows needs to be told how much of it the GPU is allowed to use. By default, it’s VERY conservative. More info below and how