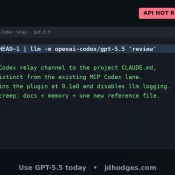

How to Use GPT-5.5 Today at the CLI (Via Your Existing Codex Subscription)

TL;DR: You can use GPT-5.5 today from your terminal by running Simon Willison’s llm-openai-via-codex plugin on top of your existing ChatGPT/Codex login. No new API key, no separate API bill. 💪 OpenAI hasn’t shipped GPT-5.5 to the public API yet (as of midday on 2026-04-24), so this is a pretty sweet, clean shell-side path until they do. 🚀 Thank you, Simon! I set this up on my dev test box and it took about two minutes end to end. I wrote